ParaView/Catalyst/Overview

Background

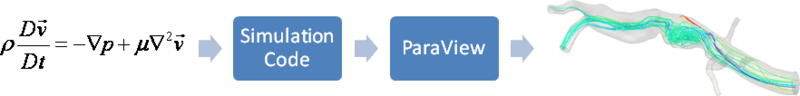

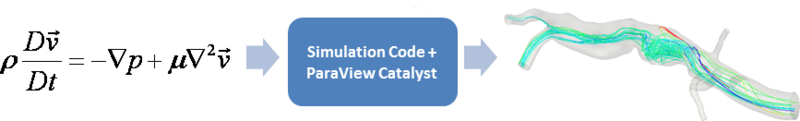

Several factors are driving the growth of simulations. Computational power of computer clusters is growing, while the price of individual computers is decreasing. Distributed computing techniques allow hundreds, or even thousands, of computer nodes to participate in a single simulation. The benefit of this computational power is that simulations are getting more accurate and useful for predicting complex phenomena. The downside to this growth is the enormous amounts of data that need to be saved and analyzed to determine the results of the simulation. The ability to generate data has outpaced our ability to save and analyze the data. This bottleneck is throttling our ability to benefit from our improved computing resources. Simulations save their states only very infrequently to minimize storage requirements. This coarse temporal sampling makes it difficult to notice some complex behavior. To get past this barrier, ParaView can now be easily used to integrate post-processing/visualization directly with simulation codes. This functionality is often referred to as co-processing, in situ processing or co-viz. This feature is available through ParaView Catalyst (previously called ParaView Co-Processing). The difference between workflows when using ParaView Catalyst can be seen in the figures below.

Technical Objectives

The main objective of the co-processing toolset is to integrate core data processing with the simulation to enable scalable data analysis, while also being simple to use for the analyst. The tool set has two main parts:

- An extensible and flexible co-processing library: The co-processing library was designed to be flexible enough to be embedded in various simulation codes with relative ease. This flexibility is critical, as a library that requires a lot of effort to embed cannot be successfully deployed in a large number of simulations. The co-processing library is also easily-extended so that users can deploy new analysis and visualization techniques to existing co-processing installations.

- Configuration tools for co-processing configuration: It is important for users to be able to configure the co-processor using graphical user interfaces that are part of their daily work-flow.

Note: All of this must be done for large data. The co-processing library will almost always be run on a distributed system. For the largest simulations, the visualization of extracts may also require a distributed system (i.e. a visualization cluster).

Overview

The toolset can be broken up into two distinct parts, the library part that links with the simulation code and a ParaView GUI plugin that can be used to create Python scripts that specify what should be output from the simulation code. Additionally, there are two roles for making co-processing work with a simulation code. The first is the developer that does the code integration. The developer needs to know the internals of the simulation code along with the co-processing library API and VTK objects used to represent grids and field data. The other is the user which needs to know how to create the scripts and/or specify the parameters for pre-created scripts in order to extract out the desired information from the simulation. Besides the information below, there are tutorials available.